Why Your AI Support Bot Is a Chatbot in Disguise

Pochadri Ariga

CTO & Co-founder · April 7, 2026 · 7 min read

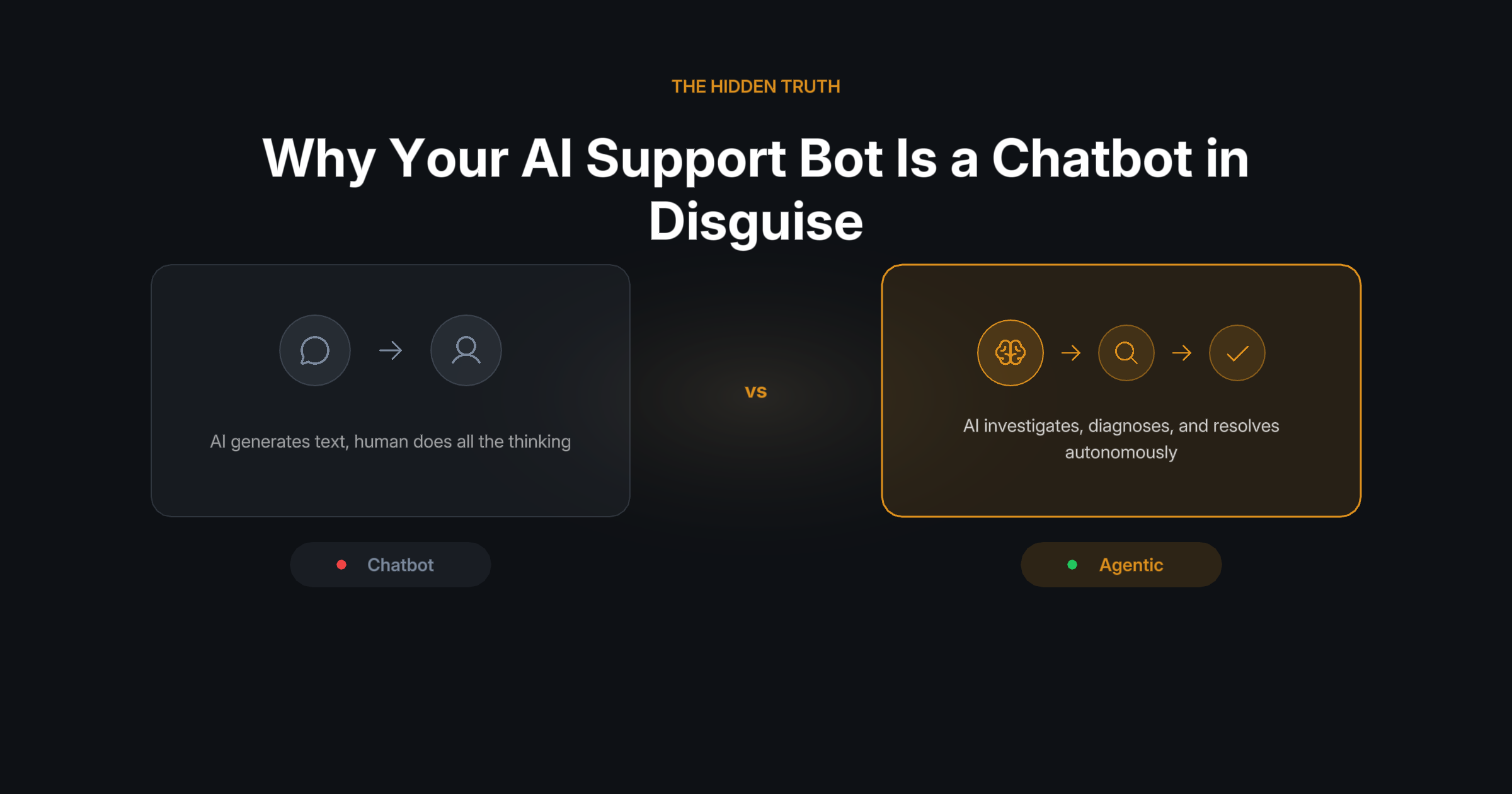

Every enterprise vendor slapped "AI-powered" on their help desk in 2025. But asking an LLM to summarize a ticket is not agentic support. It is autocomplete with extra steps.

The chatbot era is over. Most companies did not get the memo.

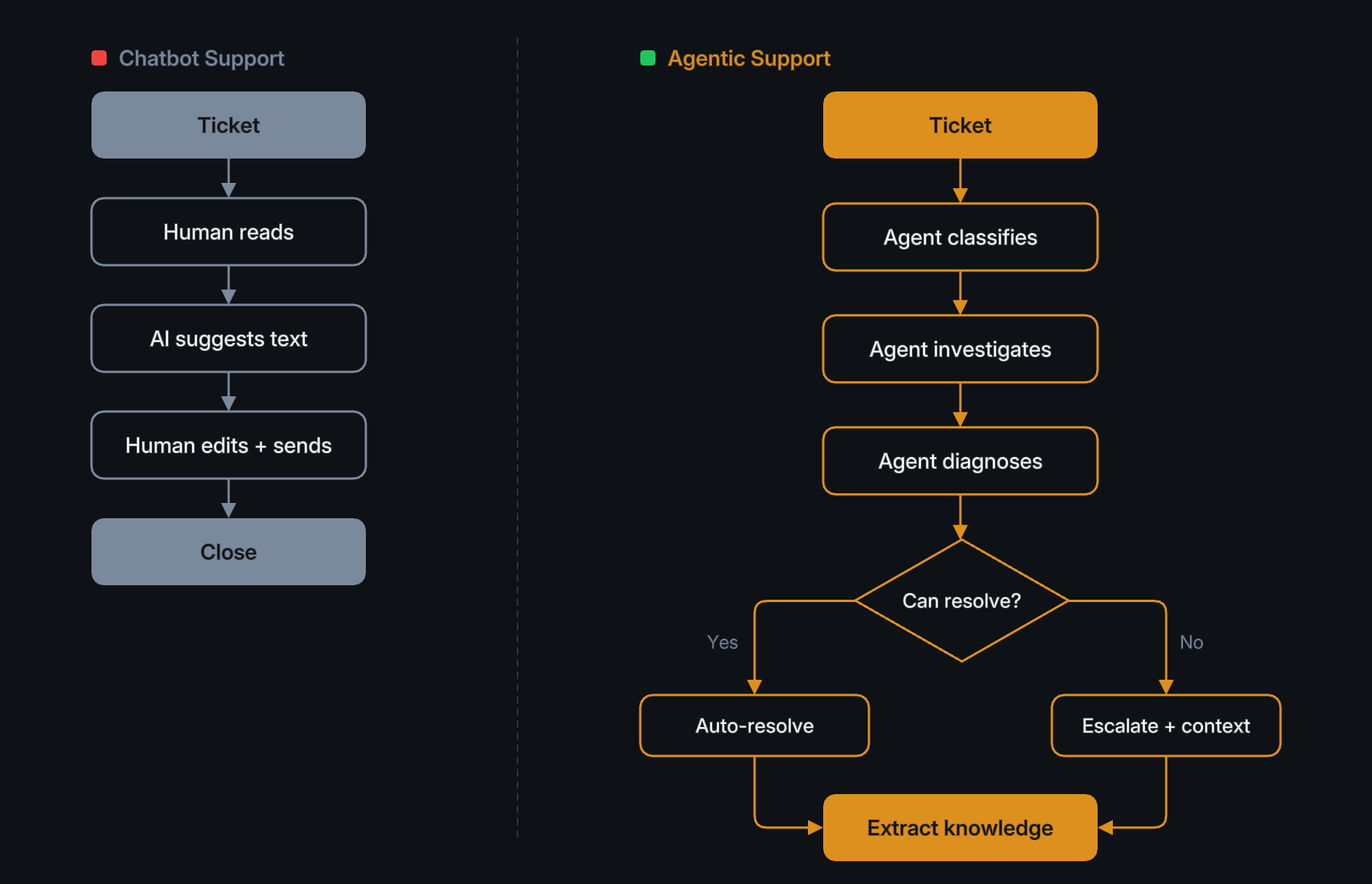

Here is what "AI-powered support" looks like at most companies today:

A customer submits a ticket. A language model reads the ticket, searches a knowledge base, and generates a response that sounds confident but is often wrong. A human agent reviews the response, edits it, and sends it. The AI "helped" by saving 30 seconds of typing.

This is not AI support. This is a chatbot with better grammar.

The pattern is always the same: human receives ticket, AI suggests response, human verifies and sends. The AI is a text generator sitting in the middle of a human workflow. It does not diagnose. It does not investigate. It does not learn. It waits to be asked and produces text.

We call this the Copilot Trap: the AI speeds up the human's typing but replaces none of the human's thinking. The support engineer still needs to understand the system, read the logs, check the deployment history, correlate with past incidents, and decide what to do. The AI just makes the final email sound nicer.

What agentic support actually means

Agentic support is fundamentally different. The AI does not assist a human. It IS the first responder.

When a ticket arrives, an AI agent:

- Reads the ticket and extracts the signal from the noise

- Investigates by pulling data from connected systems (logs, deployments, databases, monitoring)

- Correlates with past incidents using a knowledge graph of previously resolved issues

- Diagnoses the root cause, not just the symptoms

- Resolves by taking action (posting a fix, updating config, notifying the right team)

- Learns by extracting new knowledge from the resolution for future incidents

The human only gets involved when the agent is genuinely stuck. Not to review text, but to make decisions the agent cannot make.
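To make the six steps concrete, here is a minimal Python sketch of the loop, not a production design. Everything in it is an assumption for illustration: the `Case` fields, the `handle_ticket` function, the `fetch_evidence` callback standing in for connected systems, and a plain dictionary standing in for the knowledge graph.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Case:
    ticket: str
    signal: str = ""
    evidence: List[str] = field(default_factory=list)
    past_incidents: List[str] = field(default_factory=list)
    root_cause: Optional[str] = None
    actions: List[str] = field(default_factory=list)
    needs_human: bool = False


def handle_ticket(
    ticket: str,
    fetch_evidence: Callable[[str], List[str]],  # hypothetical hook into logs, monitoring, etc.
    incident_memory: Dict[str, str],             # hypothetical stand-in for the knowledge graph
) -> Case:
    case = Case(ticket=ticket)

    # 1. Read: reduce the ticket to a signal (naively, the first sentence).
    case.signal = ticket.split(".")[0].strip().lower()

    # 2. Investigate: pull data from connected systems.
    case.evidence = fetch_evidence(case.signal)

    # 3. Correlate: find past incidents matching the signal or any evidence line.
    keys = [case.signal, *case.evidence]
    case.past_incidents = [k for k in keys if k in incident_memory]

    # 4. Diagnose: accept a root cause only when a past incident supports it.
    if case.past_incidents:
        case.root_cause = incident_memory[case.past_incidents[0]]

    # 5. Resolve, or hand off to a human when the agent is stuck.
    if case.root_cause is None:
        case.needs_human = True
        return case
    case.actions.append(f"post diagnosis: {case.root_cause}")

    # 6. Learn: remember the signal so the next similar ticket correlates directly.
    incident_memory[case.signal] = case.root_cause
    return case
```

The point of the sketch is the control flow: the human appears only on the branch where diagnosis fails, not as a reviewer of every response.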

The three layers every agentic support system needs

Building agentic support is not about picking a better LLM. It is about building three layers that most "AI support" products do not have.

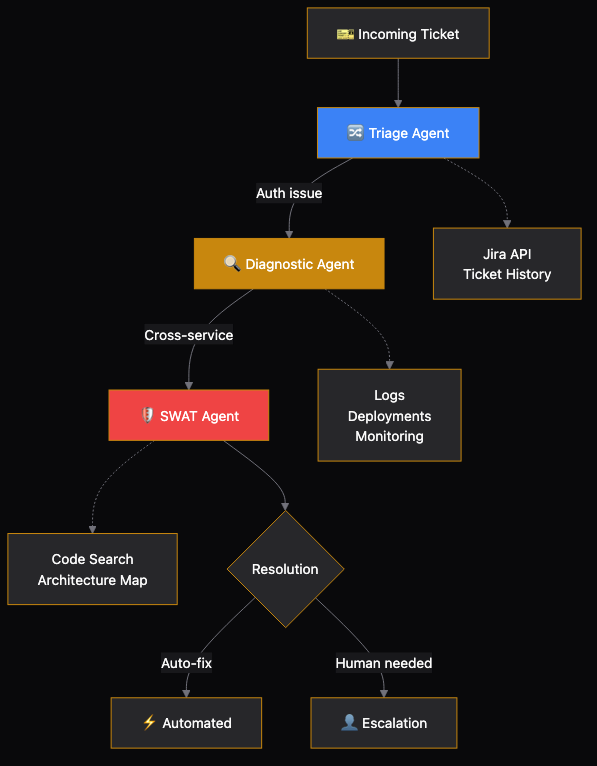

Layer 1: Multi-agent orchestration

A single AI agent cannot handle every type of support request. A billing issue requires different tools, different knowledge, and different authority than a login failure.

Agentic support uses specialized agents organized into solutions. A triage agent reads the ticket and classifies it. A diagnostic agent investigates using connected tools (logs, monitoring, ticket history). The SWAT agent — SWAT stands for Specialized Workflow and Triage — sits at the top of that stack: it is the escalation tier for incidents that need cross-service tracing, code, and production context in one place (for example, a regression tied to a deployment, or a timeout whose root cause lives in another service’s configuration). Each agent has its own system prompt, its own tool access, and its own knowledge scope.

When an agent hits its limit, it escalates to a more specialized agent, passing along everything it learned. This is not a chatbot saying "Let me transfer you." It is a cascade of increasingly capable investigators, each building on the previous one.

Consider a real example: a triage agent reads "I can't process payments" and classifies it as a payment service issue. It escalates to the diagnostic agent, which pulls logs and sees a timeout on the payment gateway. The diagnostic agent cannot trace the failure across service boundaries, so it escalates to the SWAT agent. The SWAT agent has the broadest toolkit — code search, architecture maps, deployment history, and cross-service tracing — and discovers the root cause: a recent deployment changed a connection pool size, causing timeouts under load. It recommends a rollback and posts the diagnosis to the ticket with full evidence.

Each agent in the cascade has progressively broader tool access and deeper knowledge scope. The triage agent sees tickets. The diagnostic agent sees logs and monitoring. The SWAT agent sees everything — code, architecture, deployments — and only runs when the simpler agents cannot close the case.
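The cascade can be sketched in a few lines of Python. This is an illustration under stated assumptions rather than a real implementation: the `Agent` and `Findings` classes, the `run_cascade` function, and the tool names are all hypothetical, and each agent's investigation is a hard-coded stub that replays the payments example.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Set


@dataclass
class Findings:
    ticket: str
    notes: List[str] = field(default_factory=list)  # context handed up the cascade
    root_cause: Optional[str] = None


@dataclass
class Agent:
    name: str
    tools: Set[str]
    # Returns True when this agent closed the case, False to escalate.
    investigate: Callable[["Agent", Findings], bool]


def run_cascade(agents: List[Agent], ticket: str) -> Findings:
    findings = Findings(ticket=ticket)
    for agent in agents:
        if agent.investigate(agent, findings):
            findings.notes.append(f"{agent.name}: closed")
            return findings
        findings.notes.append(f"{agent.name}: escalated")
    findings.notes.append("human: needs a decision")
    return findings


# Stub investigations that replay the payments example (hypothetical logic).
def triage(agent: Agent, f: Findings) -> bool:
    f.notes.append("classified as payment service issue")
    return False


def diagnostic(agent: Agent, f: Findings) -> bool:
    f.notes.append("logs show timeout on payment gateway")
    return False  # cannot trace across service boundaries


def swat(agent: Agent, f: Findings) -> bool:
    if "deployment_history" in agent.tools:
        f.root_cause = "deployment changed connection pool size"
        return True
    return False


CASCADE = [
    Agent("triage", {"tickets"}, triage),
    Agent("diagnostic", {"tickets", "logs", "monitoring"}, diagnostic),
    Agent("swat", {"tickets", "logs", "monitoring", "code_search",
                   "architecture_maps", "deployment_history",
                   "cross_service_tracing"}, swat),
]
```

Calling `run_cascade(CASCADE, "I can't process payments")` walks the three agents in order. The `Findings` object is what makes escalation different from a transfer: every agent appends to the same notes, so the next agent starts from what the previous one learned.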

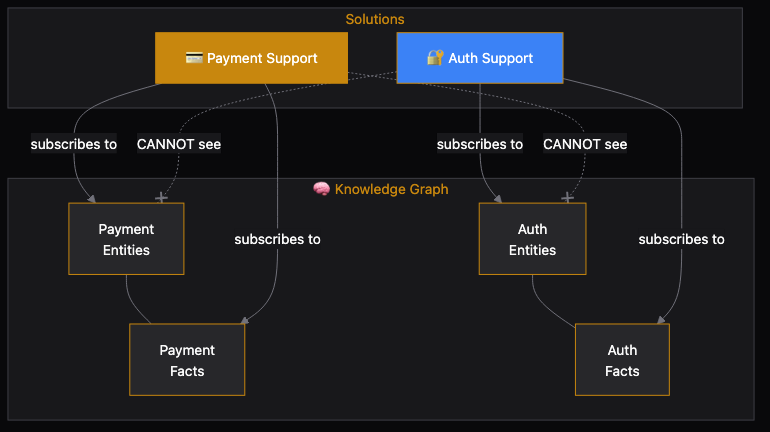

Layer 2: Domain-scoped knowledge

The biggest failure mode of AI support is not wrong answers. It is confidently wrong answers drawn from the wrong context.

If your AI agent has access to every resolved ticket across every product, it will confidently recommend a payments fix for an auth problem because the symptoms looked similar. This is worse than no answer at all, because the human trusts it.

Domain-scoped knowledge solves this. Each solution subscribes to specific knowledge domains. The payments agent sees only payments entities, payments incidents, and payments runbooks. It cannot pull a fix from the auth domain, because that knowledge is never injected into its context.
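One way to picture domain scoping is as a filter that runs before retrieval, not after. The Python sketch below is an assumption-laden illustration: `KnowledgeItem`, `Solution`, and `build_context` are hypothetical names, and the word-overlap match is a crude stand-in for whatever retrieval a real system uses.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class KnowledgeItem:
    domain: str
    text: str


@dataclass(frozen=True)
class Solution:
    name: str
    domains: FrozenSet[str]  # the knowledge domains this solution subscribes to


def build_context(solution: Solution, store: List[KnowledgeItem], query: str) -> List[str]:
    # Scope first: out-of-domain items never become retrieval candidates.
    scoped = [item for item in store if item.domain in solution.domains]
    # Then match: naive word overlap, standing in for real retrieval.
    words = set(query.lower().split())
    return [item.text for item in scoped if words & set(item.text.lower().split())]
```

With one payments item and one auth item that both mention "timeout", a payments solution querying for "timeout" gets only the payments item back. The auth item matches the query but is dropped before matching ever happens.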

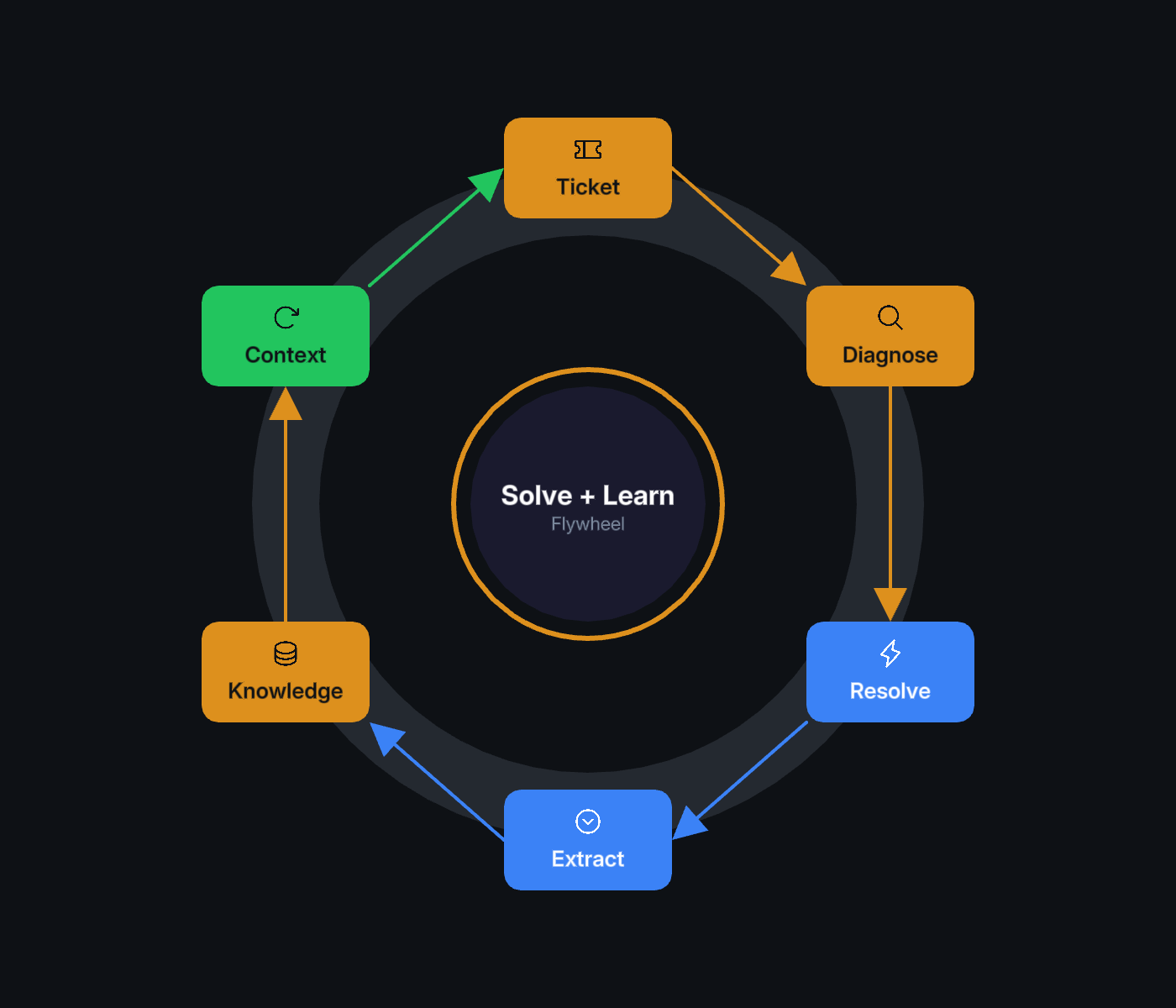

Layer 3: The solve-and-learn loop

This is where agentic support becomes a flywheel, not a tool.

Every time an agent resolves a ticket, the system extracts new knowledge: entities mentioned, relationships discovered, resolution steps that worked. This knowledge goes back into the graph, scoped to the right domain. The next similar ticket gets resolved faster because the agent already knows what worked last time.

Over time, the agent does not just stay as good as it was on day one. It gets better. Every resolved ticket makes future tickets easier. Every escalation teaches the agent what it did not know. Every human correction improves the knowledge graph.

This is the fundamental difference between a chatbot and an agentic system. A chatbot is frozen in time. An agentic system compounds.
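The loop can be sketched as a tiny domain-scoped graph that gains edges on every resolution. This Python example is a simplified assumption, not a description of any real product: the `KnowledgeGraph` class, the `caused_by` and `resolved_by` relation names, and the triple representation are all made up for illustration.

```python
from collections import defaultdict
from typing import DefaultDict, Optional, Set, Tuple

Triple = Tuple[str, str, str]  # (subject, relation, object)


class KnowledgeGraph:
    def __init__(self) -> None:
        # Triples are stored per domain so learned knowledge stays scoped.
        self._by_domain: DefaultDict[str, Set[Triple]] = defaultdict(set)

    def learn(self, domain: str, symptom: str, cause: str, fix: str) -> None:
        """Extract knowledge from a resolved ticket into the given domain."""
        self._by_domain[domain].add((symptom, "caused_by", cause))
        self._by_domain[domain].add((cause, "resolved_by", fix))

    def known_fix(self, domain: str, symptom: str) -> Optional[str]:
        """Follow symptom -> cause -> fix within one domain, if both edges exist."""
        triples = self._by_domain.get(domain, set())
        for subj, rel, cause in triples:
            if subj == symptom and rel == "caused_by":
                for subj2, rel2, fix in triples:
                    if subj2 == cause and rel2 == "resolved_by":
                        return fix
        return None
```

Before the first resolution, `known_fix` returns `None` and the agent has to investigate from scratch. After one `learn` call for the same symptom, the fix is a two-hop lookup, which is the compounding effect in miniature.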

How to tell if your "AI support" is actually a chatbot

Ask these five questions about your current system:

- Does the AI investigate, or does it just respond from a knowledge base?

- Does the AI recall what happened last time through a knowledge graph, or does it just run a keyword search?

- Can the AI act (post comments, update configs, create tickets), or only suggest?

- Does the AI get better over time automatically, or only when someone manually updates the KB?

- Does the AI know its limits and escalate with full context, or always generate a response?

If your system scores "chatbot" on three or more of these, you do not have agentic support. You have a text generator.
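If you want to run this check across several tools, the scoring rule is simple enough to write down. The Python sketch below assumes you record one boolean per question; the key names and function names are hypothetical, chosen only to mirror the five questions.

```python
from typing import Dict

# One key per question above; True means the "agentic" answer applies.
QUESTIONS = (
    "investigates",
    "uses_knowledge_graph",
    "can_act",
    "improves_automatically",
    "escalates_with_context",
)


def chatbot_score(answers: Dict[str, bool]) -> int:
    """Count the questions answered the 'chatbot' way (missing keys count too)."""
    return sum(1 for q in QUESTIONS if not answers.get(q, False))


def is_text_generator(answers: Dict[str, bool]) -> bool:
    """Apply the threshold from the text: three or more 'chatbot' answers."""
    return chatbot_score(answers) >= 3
```

Treating a missing answer as a "chatbot" answer is a deliberate choice in this sketch: if nobody on the team can say whether the tool investigates or escalates, it probably does not.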

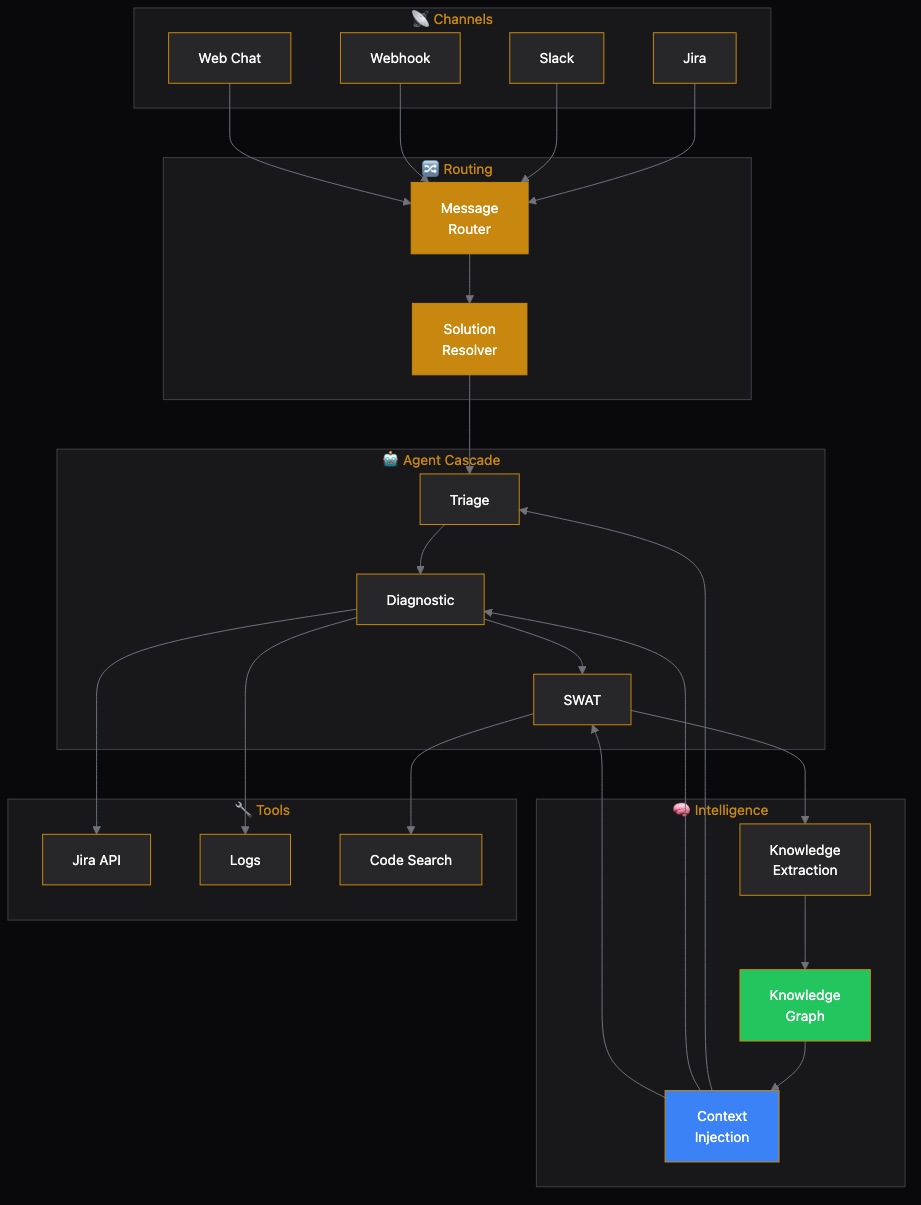

The full architecture

Put all three layers together and the full architecture has five pieces: channels, routing, agents, knowledge, and tools.

This is not a feature. It is a system. Each piece reinforces the others: channels bring tickets to the platform, routing ensures the right solution handles each issue, agents investigate and resolve, knowledge gives agents context and learns from every resolution, and tools let agents actually DO things.
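The routing piece is the easiest to show in isolation. The Python sketch below is a deliberately naive assumption: the `Ticket` type, the `ROUTES` table, the channel names, and keyword matching are all placeholders for whatever classification a real platform would use.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Ticket:
    channel: str  # e.g. "email" or "slack" (hypothetical channel names)
    text: str


# Hypothetical routing table: solution name -> keywords that select it.
ROUTES: Dict[str, Tuple[str, ...]] = {
    "payments": ("payment", "invoice", "refund"),
    "auth": ("login", "password", "sso"),
}


def route(ticket: Ticket) -> Optional[str]:
    """Return the solution that should handle the ticket, or None if no route matches."""
    text = ticket.text.lower()
    for solution, keywords in ROUTES.items():
        if any(keyword in text for keyword in keywords):
            return solution
    return None  # no match: leave the ticket for a human to route
```

Whatever the matching logic, the contract is the same: every ticket from every channel lands on exactly one solution, and that solution brings its own agents, knowledge domains, and tools.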

What changes for your team

When you move from chatbot support to agentic support, the role of your support engineers changes fundamentally.

Before: Engineers are first responders. They read every ticket, investigate every issue, and type every response. AI helps them type faster.

After: Engineers are exception handlers and knowledge curators. They handle the 10-20% of tickets that genuinely need human judgment. The rest of their time goes into improving the knowledge graph, adding new tools for agents, and tuning solution configurations.

This is not about replacing support engineers. It is about giving them leverage. One engineer with an agentic support platform can handle what used to take a team of ten, and the platform gets better every day.

The bottom line

Your AI support tool is probably a chatbot if it generates text but does not investigate, searches keywords but does not understand relationships, suggests but cannot act, and stays the same quality forever.

Agentic support is a different architecture, not a better prompt. It requires multi-agent orchestration, domain-scoped knowledge, and a solve-and-learn loop that compounds over time.

The chatbot era is ending. The agent era is here. The question is whether your support stack is ready for it.
