AI Solved Coding. It Hasn't Touched Investigation.

Srikanth Gaddam

CEO & Co-founder · April 8, 2026 · 8 min read

Cursor raised $2.3 billion. Claude Code and Codex are growing faster than anyone predicted. Portfolio companies report engineers achieving 10-20x productivity gains with AI coding tools.

a16z just published their enterprise AI adoption data. The numbers are striking. 29% of Fortune 500 companies are live, paying customers of AI startups. Not piloting. Not evaluating. Paying.

But the most interesting finding isn't about coding. It's what comes second.

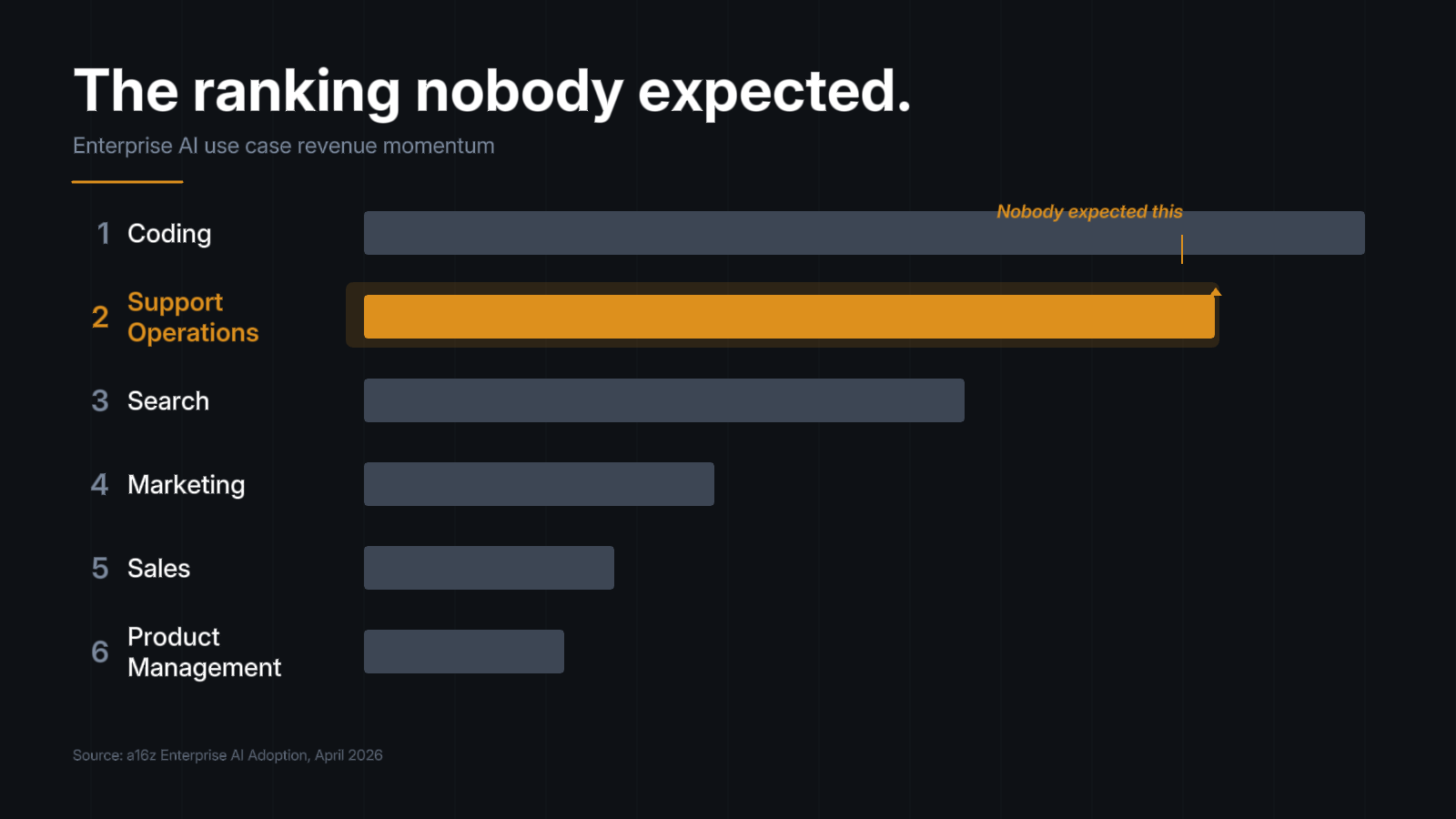

The ranking nobody expected

a16z ranked enterprise AI use cases by revenue momentum. Coding is first, by an order of magnitude. Search is third, dominated by ChatGPT and vertical players like Harvey in legal and Glean in enterprise.

Support operations is second.

Not marketing. Not sales. Not product management. Support.

a16z's reasoning: support work is text-based, follows standard operating procedures, produces quantifiable metrics like ticket resolution and CSAT scores, and has natural escalation paths to humans when the AI gets it wrong. It doesn't require 100% accuracy. It requires consistent accuracy with a clear fallback.

That checklist describes something very specific. Not the chatbot that deflects customer questions. Not the knowledge base search that surfaces help articles. The actual investigation underneath: an engineer tracing logs, querying databases, searching prior resolutions, correlating events across six different tools to figure out why a customer's data looks wrong.

That investigation is the bulk of every support ticket. Enterprise engineering leaders have measured it independently: roughly 70% of every ticket's resolution time goes to gathering context and finding the root cause. The remaining 30% is the actual fix.

One engineering manager tracked this on their own team. Mean time to resolution: 48 minutes. Actual debugging: 15 minutes. The remaining 33 minutes, about 69% of the total, went to coordination and context gathering. Stripe's Developer Coefficient report found engineers spend 42% of their time on maintenance and debugging. Cambridge University research puts average failure resolution at 13 hours, with 41% of developers citing bug reproduction as their primary barrier.

The problem is measured. The problem is large. And the AI hasn't arrived yet.

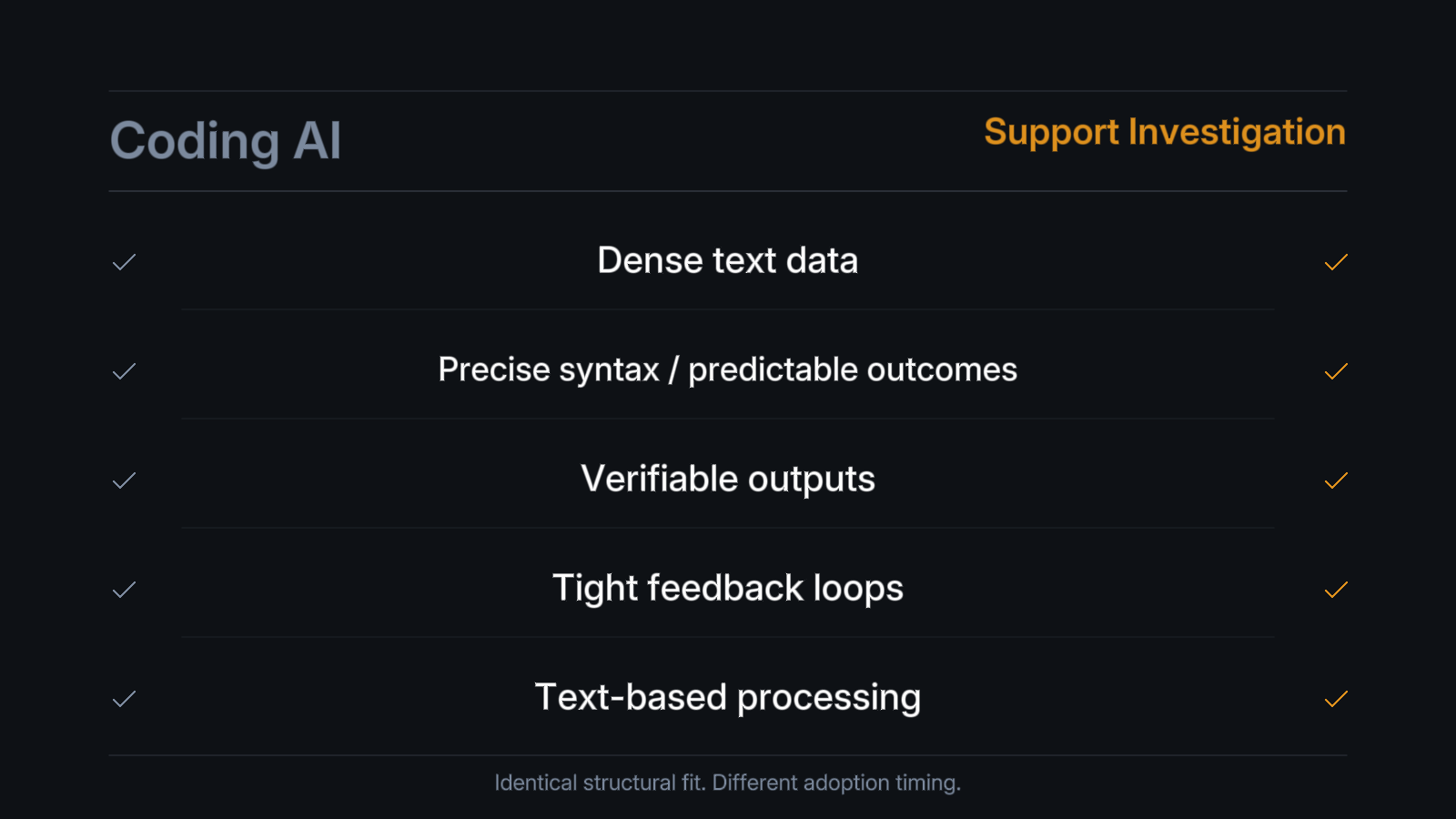

Why coding worked first

a16z's analysis of why coding AI succeeded is instructive. Not because coding is easy. Because coding has structural properties that make AI productive:

Dense data availability: decades of open-source code to train on. Text-based processing: precise syntax with predictable outcomes. Verifiable outputs: code either runs or it doesn't. Tight feedback loops: write, run, see the result.

Every one of these properties exists in support investigation. Ticket descriptions, error messages, stack traces, log entries, database schemas, code paths, prior resolution records. All text. Investigation outputs are verifiable. The root cause is either correct or it isn't. The feedback loop is immediate. An engineer reads the diagnosis and either acts on it or corrects it.

The structural fit is identical. The timing is different.

Coding AI had a head start because engineers are early adopters who choose their own tools. Support investigation requires a platform that integrates with the enterprise's ticketing system, codebase, observability stack, and databases. The integration surface is larger. The buyer is a VP Engineering with a procurement process, not an individual developer with a credit card.

But the structural suitability is the same. And the enterprise buyer is no longer resistant. 29% of Fortune 500 companies are already paying AI startups.

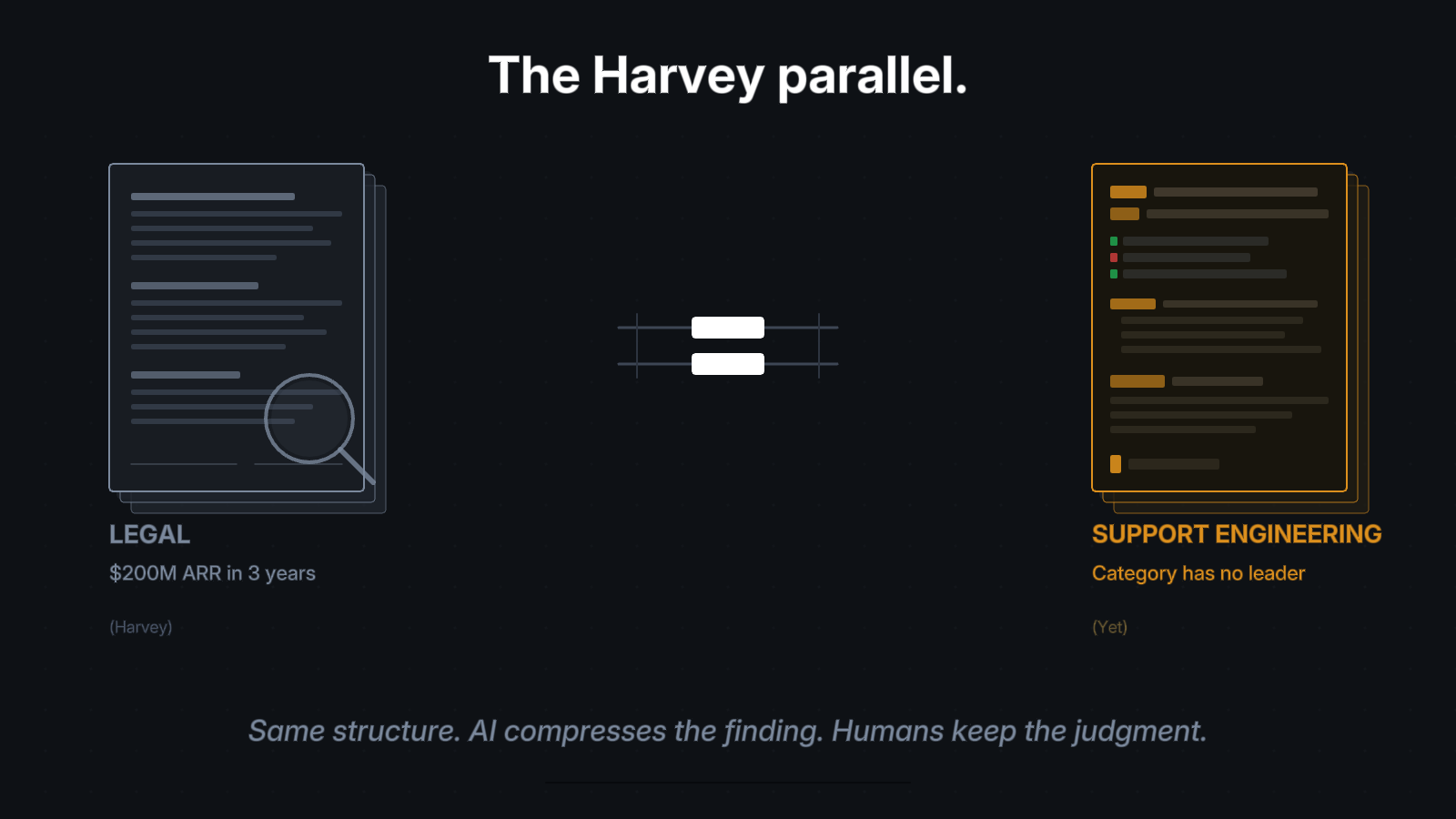

The Harvey parallel

The most useful analogy in a16z's data isn't coding. It's legal.

Harvey, a legal AI company, reached approximately $200 million in annualized recurring revenue within three years of founding. Eve, focused on plaintiff law, surpassed a billion-dollar valuation with more than 450 customers. Law is a historically software-resistant industry. Law firms don't typically adopt new technology quickly. The sales cycles are long. The buyers are conservative.

AI broke the pattern because the structural fit was undeniable. Lawyers spend most of their time on investigation: parsing dense text, reasoning over large document sets, finding precedent across fragmented sources. AI compressed the finding. Lawyers kept the judgment. Revenue increased because firms could process more cases.

Support engineering has the same structure. Engineers spend most of their time investigating: parsing logs, reasoning over codebases, finding relevant code paths and prior resolutions across fragmented tools. The fix itself is usually straightforward once the root cause is identified.

Harvey didn't just automate legal work. It automated legal investigation. The parallel to support engineering investigation is structural, not metaphorical.

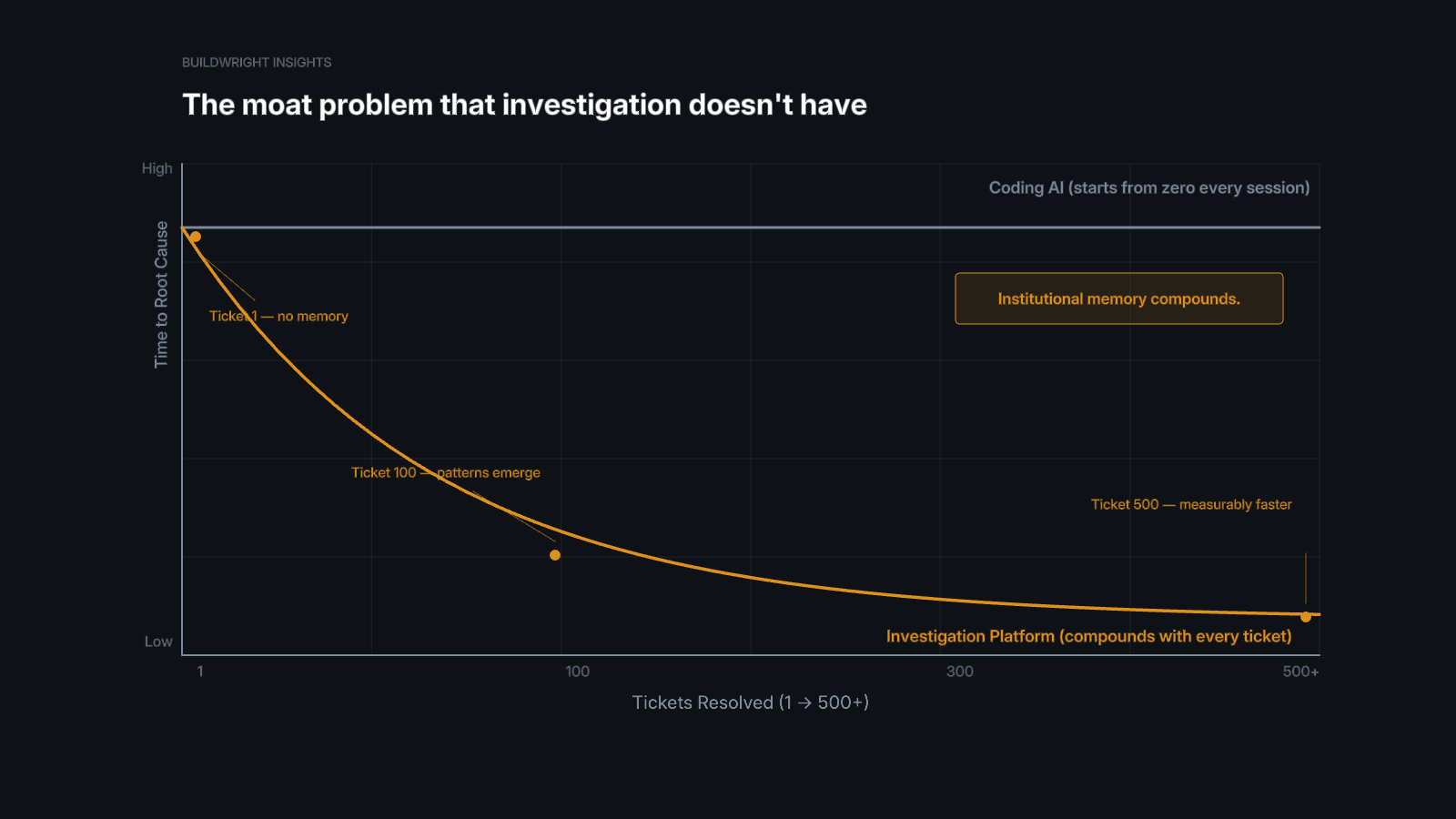

The moat problem that investigation doesn't have

a16z flags something important about coding AI: competitive advantages are hard to sustain. The work is accessible to any model. Code completion is increasingly commoditized. Cursor is exceptional, but the barrier to building a code completion tool is falling with every model release.

Investigation has a different dynamic.

Every investigation produces a trace. What evidence was gathered. What hypotheses were tested. What was ruled out. What led to the root cause. Which code paths mattered. Which logs correlated. How the team resolved a similar issue three months ago.
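To make the idea of a trace concrete, here is a minimal sketch of what one record might hold. The class names, field names, and sample values are all illustrative assumptions, not a description of any real platform's schema.

```python
from dataclasses import dataclass, field


@dataclass
class Hypothesis:
    statement: str
    outcome: str  # hypothetical convention: "confirmed" or "ruled_out"


@dataclass
class InvestigationTrace:
    # All field names are assumptions chosen to mirror the prose above.
    ticket_id: str
    evidence: list[str] = field(default_factory=list)        # what was gathered
    hypotheses: list[Hypothesis] = field(default_factory=list)  # what was tested
    code_paths: list[str] = field(default_factory=list)      # which code paths mattered
    prior_tickets: list[str] = field(default_factory=list)   # similar past issues
    root_cause: str = ""

    def ruled_out(self) -> list[str]:
        """Return the hypotheses that were tested and eliminated."""
        return [h.statement for h in self.hypotheses if h.outcome == "ruled_out"]


# Made-up example record.
trace = InvestigationTrace(
    ticket_id="TICKET-1",
    evidence=["error log from service A", "row counts in table B"],
    hypotheses=[
        Hypothesis("stale cache", "ruled_out"),
        Hypothesis("failed nightly sync job", "confirmed"),
    ],
    code_paths=["sync/job.py"],
    prior_tickets=["TICKET-0"],
    root_cause="failed nightly sync job",
)
print(trace.ruled_out())
```

The point of the sketch is that the eliminated hypotheses and the evidence are stored next to the root cause, so a later investigation can reuse the reasoning and not only the outcome.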

Those traces compound. The 500th investigation on a codebase is fundamentally easier than the first. The platform has seen the failure modes. It knows which services interact. It has searchable precedent from prior resolutions. Month 6 is measurably faster than Month 1.

Coding tools start from zero every session. You open a file, the AI assists, you close the file. Nothing persists. Nothing compounds.

Investigation platforms build institutional memory. The knowledge stays when engineers leave. The patterns transfer when teams change. The intelligence deepens with every ticket resolved.

This is the difference between a tool that augments individual productivity and a platform that compounds organizational intelligence. Both are valuable. Only one builds a durable asset.

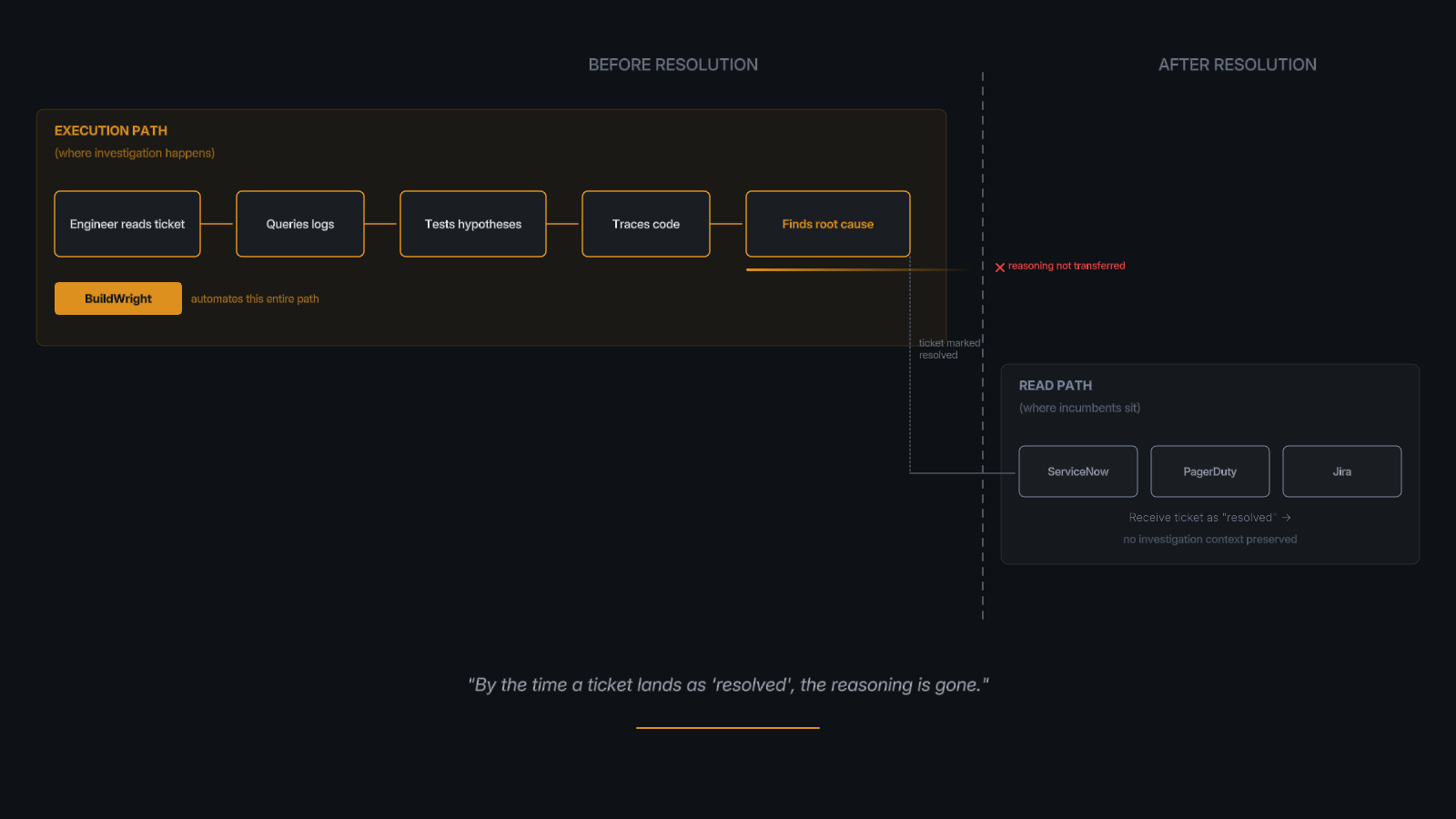

Jaya Gupta at Foundation Capital frames this as the difference between systems that capture what happened and systems that capture why decisions were made. Enterprise software has always stored the end state: the ticket is resolved, the incident is closed. It never stored the investigation reasoning that got there. The hypotheses tested. The code paths traced. The context that connected the customer's report to its root cause.

Consumer companies built trillion-dollar businesses by compounding behavioral traces. Enterprise software never had an equivalent compounding loop. Investigation traces are that loop for support engineering.

Why incumbents aren't building this

ServiceNow knows what the ticket looks like now. It doesn't know what the investigation looked like when the engineer was deciding where to look. PagerDuty knows which alert fired. It doesn't know which logs the engineer checked, which hypotheses were eliminated, or which prior tickets informed the diagnosis. By the time a ticket lands as "resolved" in any system of record, the investigation reasoning is gone.

The gap is architectural, not a matter of timing. Incumbents are in the read path. They receive data after decisions are made. Investigation platforms sit in the execution path. They see the full context at decision time because they are performing the investigation.

You cannot build a compounding investigation intelligence layer by adding AI to a system that only stores current state. The knowledge that matters, the reasoning that connects symptoms to root causes, is never captured in systems designed to track tickets, route alerts, or manage workflows.

This is also why AI coding tools can't solve it. Cursor and Claude Code are extraordinary at helping an engineer investigate a single issue in a single session. But they start from zero every time. They don't remember what they learned from the last 500 tickets. They don't know which engineer fixed the same issue last quarter. They don't automatically map service dependencies from prior investigations. Investigation at the scale of an enterprise support organization, thousands of tickets per month across dozens of services, requires a platform, not a tool.

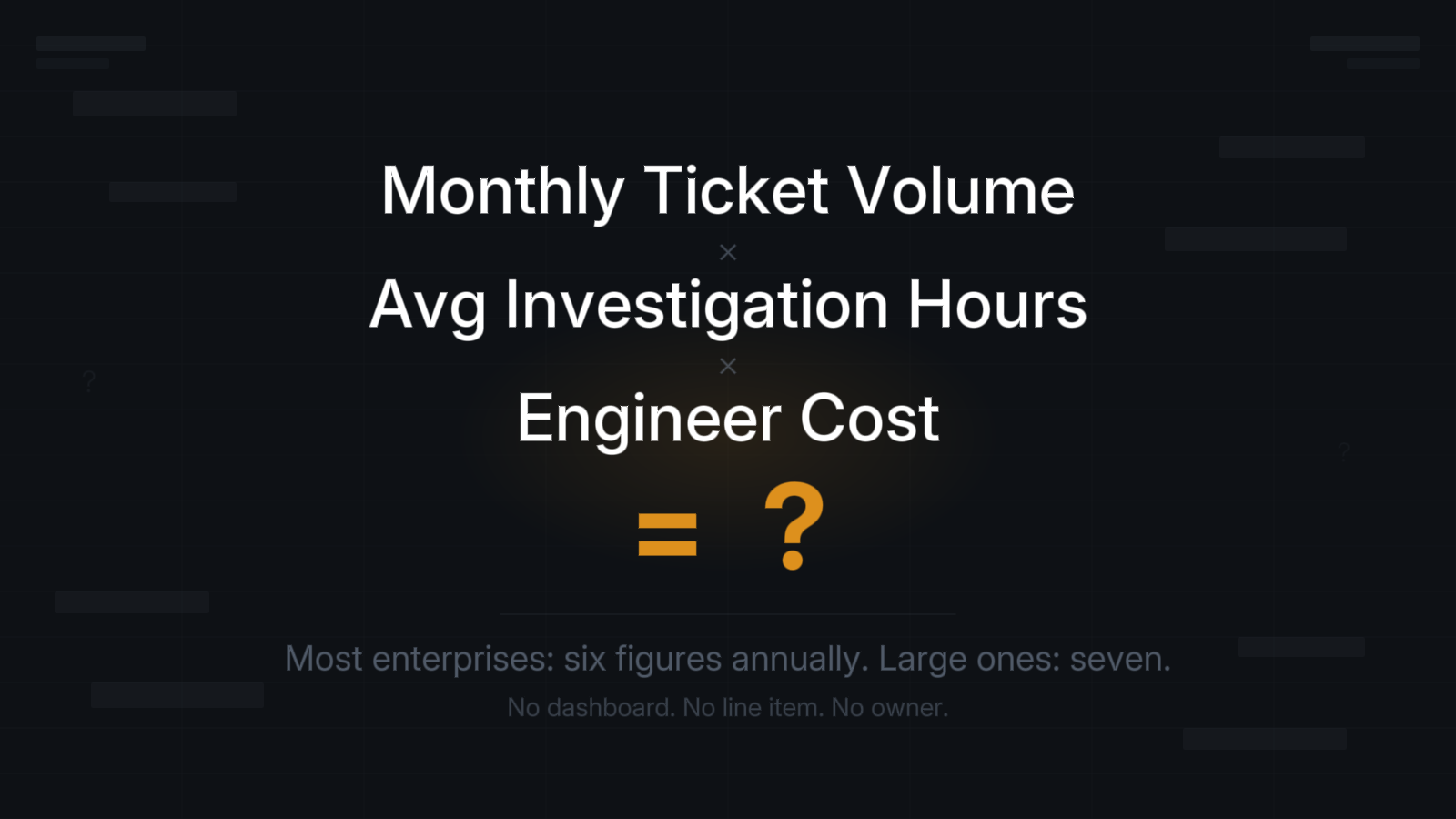

The number every enterprise has but hasn't calculated

a16z's data confirms what practitioners have been measuring quietly for years. Support investigation is one of the largest, most structurally suitable AI use cases in enterprise software. The models are capable. The enterprise buyer is ready. The structural fit is verified.

The category has no dominant player.

Every enterprise has a number. Monthly ticket volume multiplied by average investigation hours per ticket multiplied by hourly engineer cost, annualized. For most organizations, it's six figures annually. For large ones, seven.

Most have never calculated it. The cost hides inside engineering headcount. It has no dashboard. It has no line item. It has no owner.
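The number can be sketched in a few lines. The function name, the per-ticket and per-hour units, the multiplication by 12 to annualize, and the sample inputs are all assumptions for illustration. They are not measured figures.

```python
def annual_investigation_cost(
    monthly_tickets: int,
    investigation_hours_per_ticket: float,
    engineer_hourly_cost: float,
) -> float:
    """Ticket volume x investigation hours x engineer cost, annualized."""
    monthly_cost = monthly_tickets * investigation_hours_per_ticket * engineer_hourly_cost
    return monthly_cost * 12  # assumption: volume is steady across the year


# Hypothetical inputs: 200 tickets a month, 1.5 hours of investigation each,
# and a fully loaded engineer cost of $100 per hour.
cost = annual_investigation_cost(200, 1.5, 100.0)
print(f"${cost:,.0f} per year")
```

With these made-up inputs the result is $360,000 a year, which lands in the six-figure range. Swapping in an organization's own ticket volume and loaded engineering rate gives its version of the number.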

But the waste is real. The structural fit for AI is real. And the market is waiting for the platform that names the problem and solves it.

The investigation gap is closing. The question is who closes it first.

Srikanth Gaddam is the co-founder and CEO of BuildWright, where he works on compressing enterprise support investigation from hours to minutes.
