From Vector Memory to Context Graphs: The Evolution of Agent Memory

Pochadri Ariga

CTO & Co-founder · March 4, 2026 · 8 min read


Understanding how memory architecture determines the capability ceiling of AI agents—and why the shift from storing information to storing experience changes everything.

AI ENGINEERING · February 2025 · 12 min read · BuildWright Engineering Intelligence

Introduction: The Memory Problem in AI Agents

The conversation about AI agents often centers on reasoning capabilities, tool use, and chain-of-thought prompting. But there is a more fundamental issue that receives far less attention: memory architecture. Without effective memory, even the most sophisticated reasoning engine operates with severe limitations.

Most agents today follow a remarkably simple pattern: receive a query, process it through an LLM, return a response. Each interaction exists in isolation. The system forgets everything the moment the conversation ends.

If an agent forgets everything every few minutes, no amount of reasoning ability matters.

— The core problem with stateless systems

This stateless design creates a fundamental ceiling on what agents can accomplish. They cannot learn from past interactions, build context over time, or develop the kind of accumulated understanding that makes human experts valuable.

The evolution of agent memory represents one of the most important shifts happening in AI architecture today. Understanding this evolution—from simple vector databases to sophisticated context graphs—reveals where the field is heading and what becomes possible when agents truly remember.

This article explores six generations of memory systems and introduces the concept of separating long-term memory from working context.

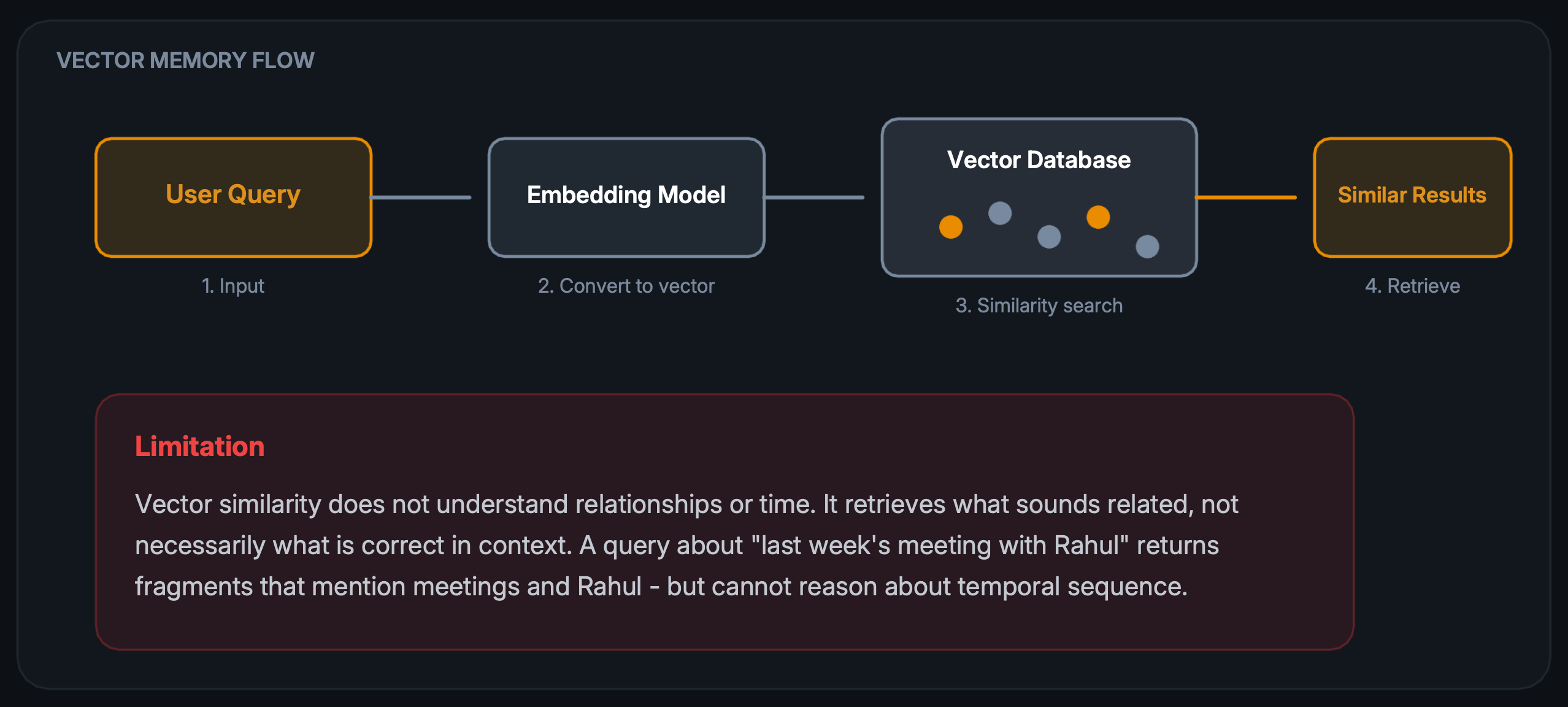

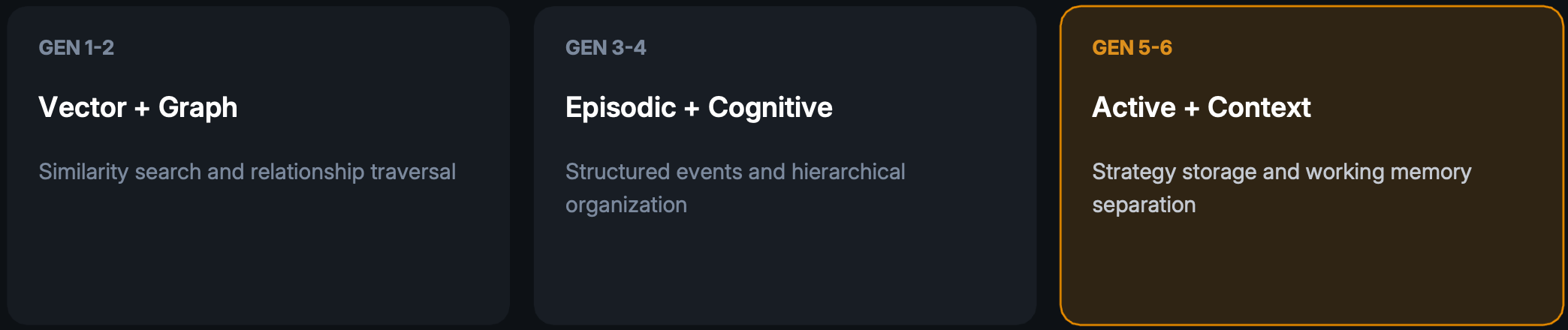

Generation 1: Vector Memory

The first generation of agent memory relies on vector embeddings. Conversations are broken into chunks, converted to high-dimensional vectors, and stored in specialized databases. When context is needed, the system performs similarity search to retrieve relevant fragments.

This approach works well for retrieving topically similar content but struggles with structured queries. Questions involving relationships between entities, temporal ordering, or causal chains often return incomplete or misleading results.

The fundamental issue is that embeddings capture semantic similarity but lose structural information. The system knows these chunks are "about" similar topics but cannot traverse relationships or reconstruct timelines.
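Below is a minimal sketch of the chunk, embed, and search cycle. It assumes a toy bag-of-words `embed` function in place of a real learned embedding model. `VectorMemory`, `embed`, and `cosine` are hypothetical names chosen for illustration, not any particular vector database's API.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model (assumption): a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class VectorMemory:
    """Stores chunks alongside their vectors and retrieves them by similarity alone."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Counter]] = []

    def add(self, chunk: str) -> None:
        self.items.append((chunk, embed(chunk)))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]


# Hypothetical usage: three chunks from past conversations.
memory = VectorMemory()
memory.add("The deploy failed because the database migration timed out.")
memory.add("We rolled back the deploy and increased the migration timeout.")
memory.add("Lunch options near the office include tacos and ramen.")
print(memory.search("why did the deploy fail?", k=1))
```

`search` ranks chunks on word overlap and nothing else. No stored field records that the rollback happened after the failure, so a question about ordering or cause cannot be answered from the structure of the memory itself.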

When Vector Memory Works

- Retrieving documentation snippets by topic

- Finding similar past conversations

- Semantic search across unstructured text

- Quick context injection for simple queries

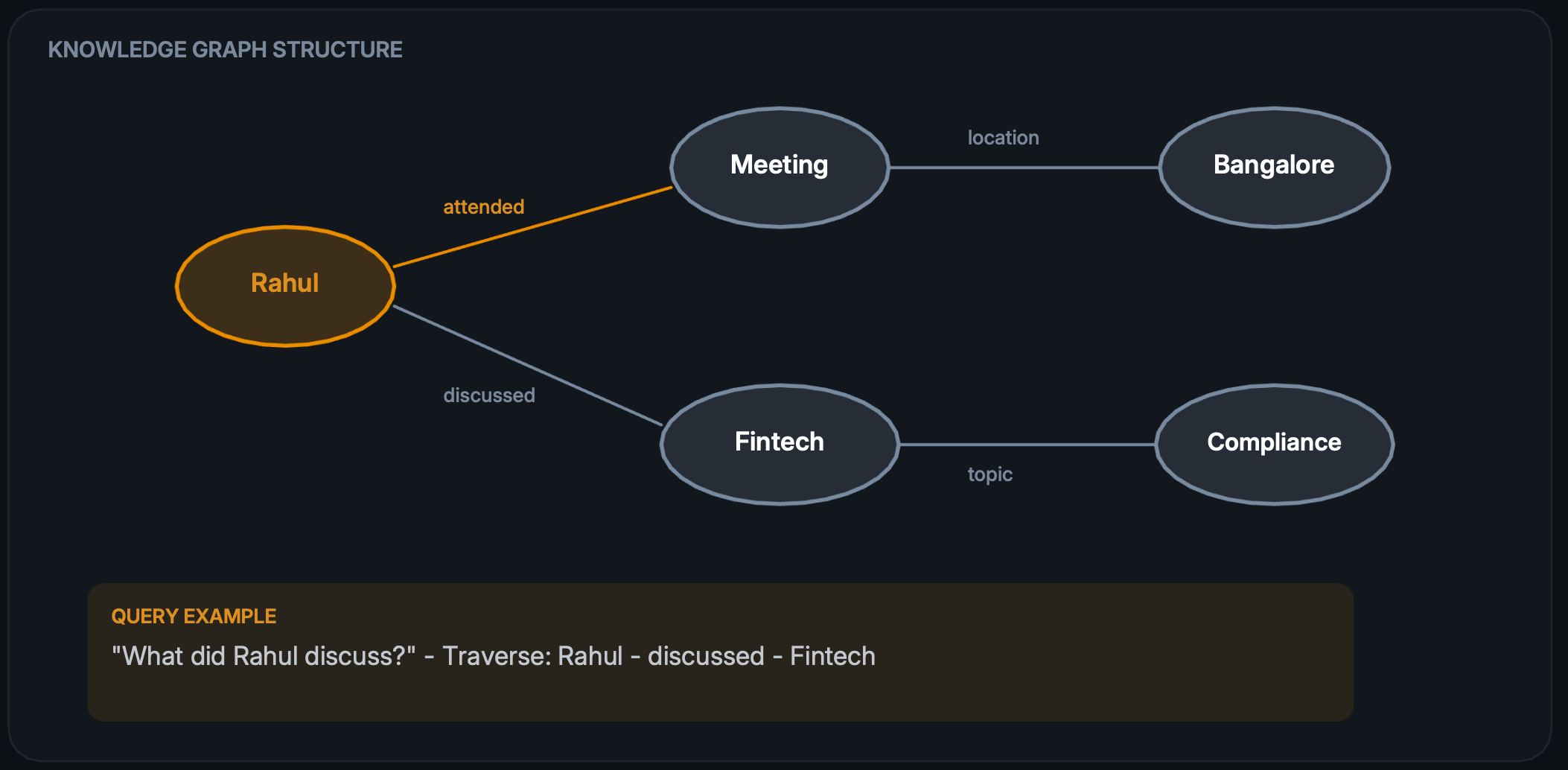

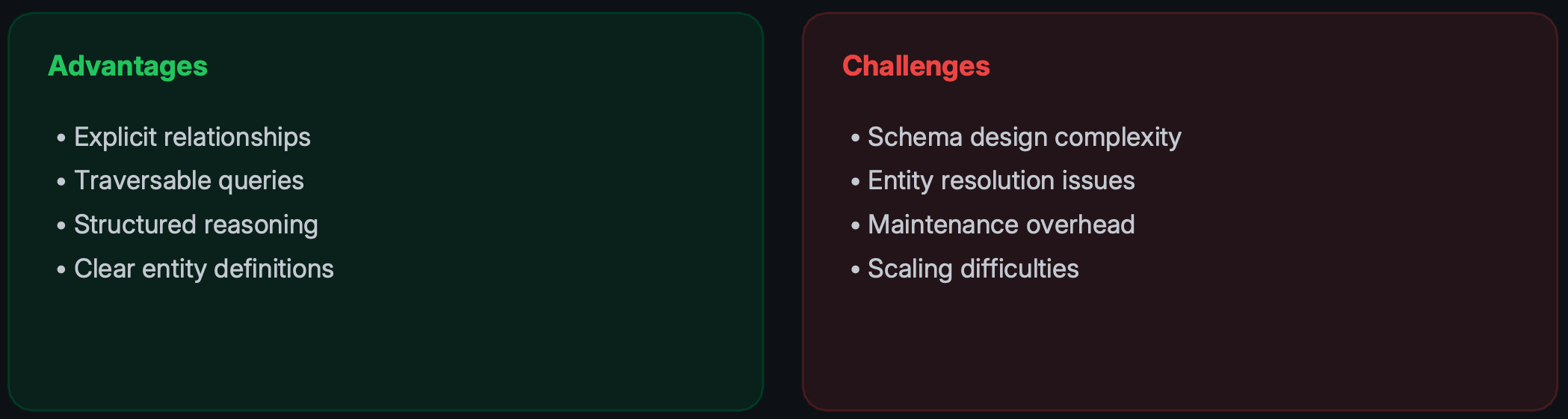

Generation 2: Knowledge Graph Memory

Knowledge graphs address the relationship problem by structuring information as entities connected by explicit relationships. Instead of isolated chunks, the system stores a web of connected facts that can be traversed and queried.

This structure enables traversal-based reasoning. The system can follow relationship chains, answer questions about connections, and infer implicit relationships through graph algorithms.

Trade-offs

Knowledge graphs work well for domains with clear entity types and predictable relationships. They struggle when information is ambiguous, relationships are implicit, or the schema needs to evolve rapidly.

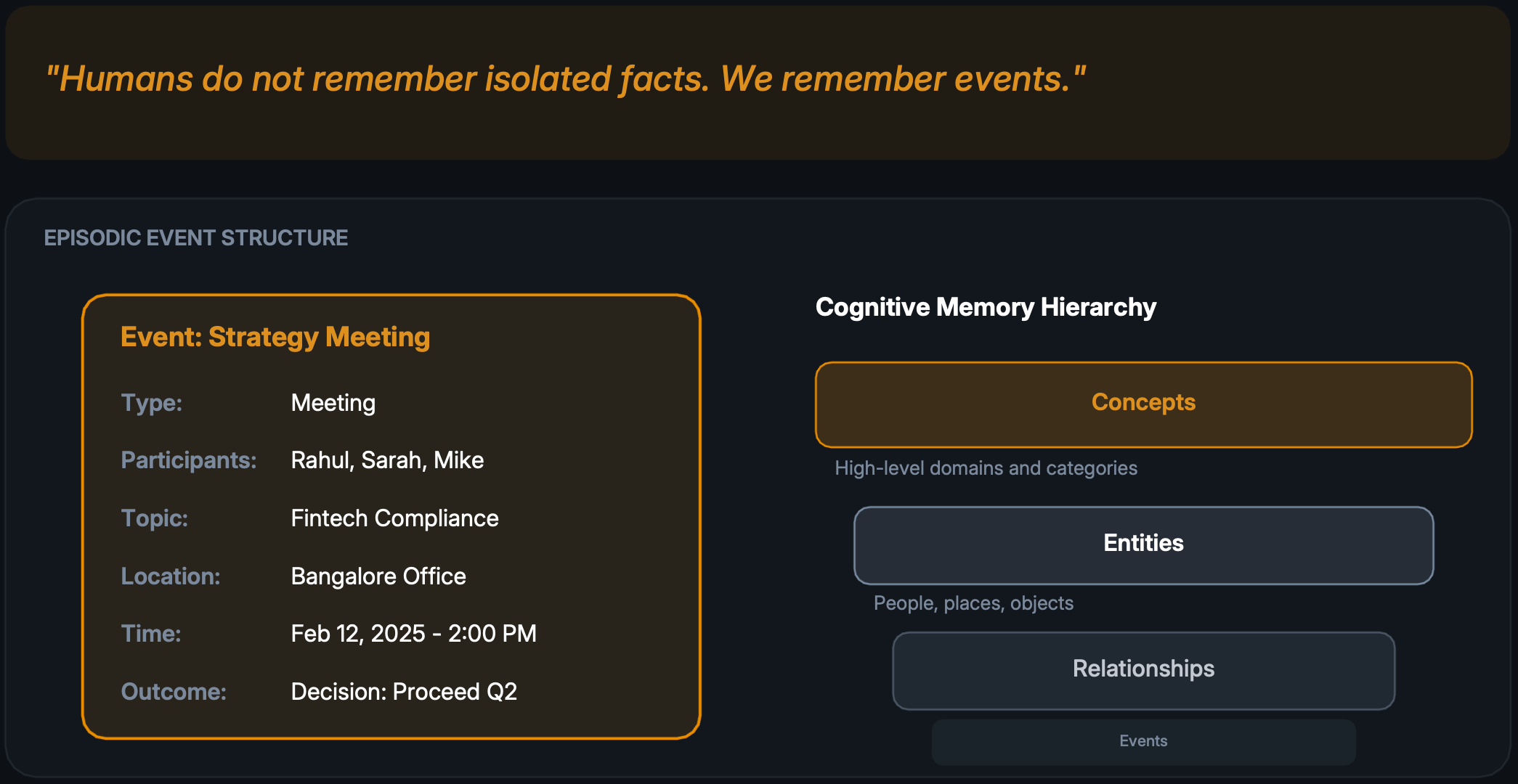

Generations 3 and 4: Episodic and Cognitive Memory

Episodic memory borrows from cognitive science. Rather than storing isolated facts or relationships, the system records complete events with full context—participants, topics, locations, timestamps, and outcomes.

Hierarchical cognitive memory graphs organize these events into layers that mirror human knowledge organization. At the top sit broad concepts, then entities, then relationships between entities, and finally specific events anchored in time.

This structure enables powerful queries like "What happened in all meetings where compliance was discussed?" or "Show me the timeline of decisions involving the engineering team." The temporal dimension becomes a first-class citizen.
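The sketch below shows how storing complete events makes those two example queries straightforward. It covers only the event layer of the hierarchy; the concept, entity, and relationship layers are omitted. `Episode`, `EpisodicMemory`, and the sample events are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Episode:
    """One complete event: what kind, who, about what, where, when, and the outcome."""
    kind: str
    participants: set[str]
    topics: set[str]
    location: str
    timestamp: datetime
    outcome: str


class EpisodicMemory:
    def __init__(self) -> None:
        self.episodes: list[Episode] = []

    def record(self, episode: Episode) -> None:
        self.episodes.append(episode)

    def with_topic(self, kind: str, topic: str) -> list[Episode]:
        """E.g. every meeting where compliance was discussed."""
        return [e for e in self.episodes if e.kind == kind and topic in e.topics]

    def timeline(self, kind: str, participant: str) -> list[Episode]:
        """E.g. decisions involving a team, in chronological order."""
        hits = [e for e in self.episodes if e.kind == kind and participant in e.participants]
        return sorted(hits, key=lambda e: e.timestamp)


# Hypothetical events, recorded out of chronological order.
mem = EpisodicMemory()
mem.record(Episode("decision", {"engineering", "security"}, {"compliance"}, "HQ",
                   datetime(2025, 3, 10), "Adopt SSO for internal tools"))
mem.record(Episode("meeting", {"engineering", "legal"}, {"compliance", "audit"}, "video call",
                   datetime(2025, 2, 1), "Audit scheduled for Q2"))
mem.record(Episode("decision", {"engineering"}, {"infrastructure"}, "HQ",
                   datetime(2025, 1, 15), "Upgrade the primary database"))

compliance_meetings = mem.with_topic("meeting", "compliance")
eng_decisions = mem.timeline("decision", "engineering")
print([e.outcome for e in eng_decisions])
```

Each episode carries a timestamp, so the timeline query can sort on it directly. A set of similarity-ranked fragments has no such field to sort on.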

Key Insight

By storing complete events rather than fragments, the system can reconstruct context, understand sequences, and reason about cause and effect in ways that vector or graph approaches cannot.

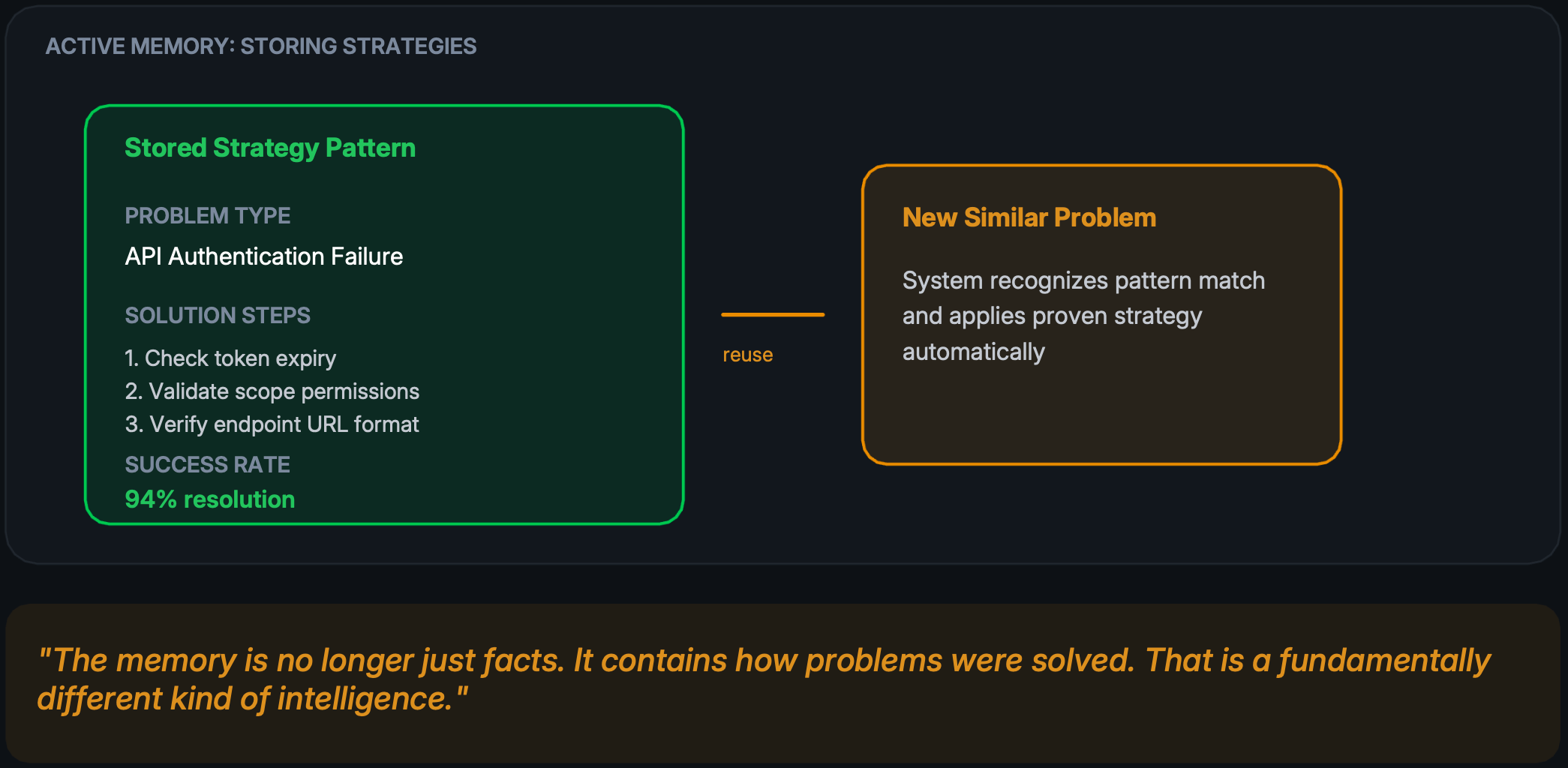

Generations 5 and 6: Active Memory and Context Graphs

Active memory represents a paradigm shift. Instead of storing data about what happened, the system stores how problems were solved—the strategies, steps, and patterns that led to successful outcomes.
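One way to picture "storing how problems were solved" is a record of the steps taken and whether they worked. Past solutions can then be recalled for a new problem that shares its features. This is a minimal sketch under stated assumptions: problems are matched by overlapping tags rather than a learned similarity, and `Procedure`, `ActiveMemory`, and the example incidents are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Procedure:
    """How a problem was solved, not just what happened."""
    problem: str            # short description of the problem
    tags: frozenset[str]    # features used to match future problems (assumption)
    steps: list[str]        # the strategy that was followed
    succeeded: bool


class ActiveMemory:
    def __init__(self) -> None:
        self.procedures: list[Procedure] = []

    def store(self, procedure: Procedure) -> None:
        self.procedures.append(procedure)

    def recall(self, tags: set[str]) -> list[Procedure]:
        """Successful procedures that share at least one tag, best overlap first."""
        scored = [(len(p.tags & tags), p) for p in self.procedures if p.succeeded]
        scored = [(score, p) for score, p in scored if score > 0]
        scored.sort(key=lambda sp: sp[0], reverse=True)
        return [p for _, p in scored]


# Hypothetical incident history.
memory = ActiveMemory()
memory.store(Procedure("Checkout 500s after deploy", frozenset({"deploy", "http-500"}),
                       ["Check recent deploys", "Diff config", "Roll back"], True))
memory.store(Procedure("Checkout 500s, cache guess", frozenset({"http-500"}),
                       ["Flush cache"], False))
memory.store(Procedure("Slow queries after migration", frozenset({"deploy", "latency"}),
                       ["Inspect query plans", "Add index"], True))

best = memory.recall({"deploy", "http-500"})
print(best[0].steps)
```

Failed attempts stay in the record but are filtered out at recall. The agent reuses strategies that worked and does not repeat ones that did not.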

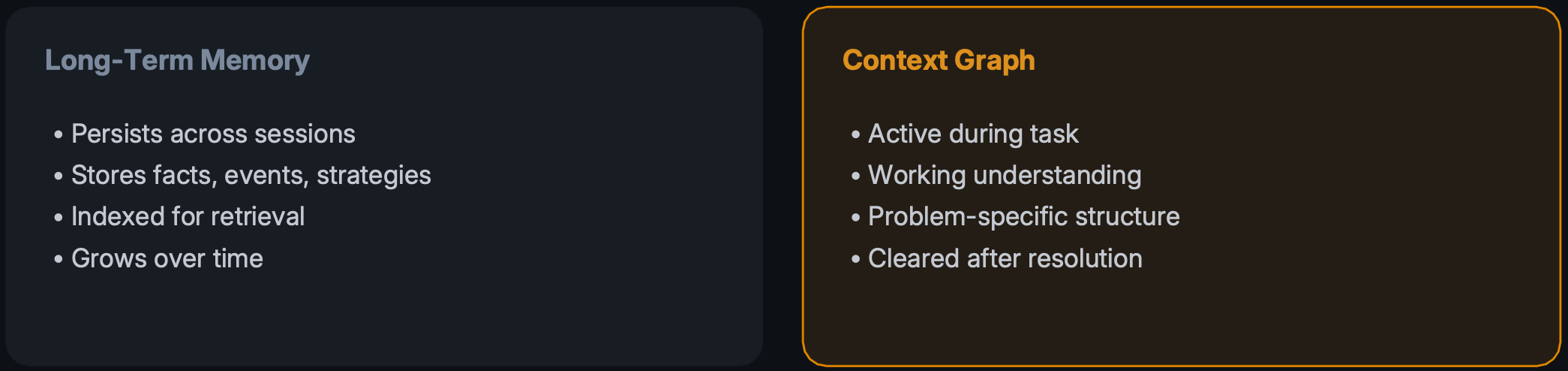

Context Graphs: The Working Whiteboard

While long-term memory stores accumulated knowledge, a context graph represents the agent's current understanding of a problem. Think of it as the difference between a library and the notes spread across your desk while you work.

This separation enables organized reasoning. The context graph can be structured specifically for the current problem without polluting long-term memory with temporary working state.
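The sketch below shows the whiteboard idea: a per-task graph of hypotheses and evidence, where only supported conclusions are promoted to long-term storage. The node kinds, the `supports` relation, and the promotion rule are assumptions made for illustration. `ContextGraph` and its methods are hypothetical, and a plain list stands in for long-term memory.

```python
from dataclasses import dataclass, field


@dataclass
class ContextGraph:
    """Working state for one task: hypotheses, evidence, and links between them.

    Nothing here is persisted unless it is explicitly promoted.
    """
    task: str
    nodes: dict[str, str] = field(default_factory=dict)              # node id -> kind
    edges: list[tuple[str, str, str]] = field(default_factory=list)  # (src, relation, dst)

    def add_node(self, node_id: str, kind: str) -> None:
        self.nodes[node_id] = kind

    def link(self, src: str, relation: str, dst: str) -> None:
        if src not in self.nodes or dst not in self.nodes:
            raise KeyError("both endpoints must be added before linking")
        self.edges.append((src, relation, dst))

    def supported(self) -> list[str]:
        """Hypotheses with at least one incoming 'supports' edge, without duplicates."""
        return list(dict.fromkeys(
            dst for _, rel, dst in self.edges
            if rel == "supports" and self.nodes.get(dst) == "hypothesis"))

    def promote(self, long_term: list[str]) -> None:
        """Copy only supported conclusions into long-term storage."""
        long_term.extend(self.supported())


# Hypothetical investigation.
long_term: list[str] = []
ctx = ContextGraph(task="checkout 500s")
ctx.add_node("bad config push", "hypothesis")
ctx.add_node("cache eviction", "hypothesis")
ctx.add_node("errors start at 14:02", "evidence")
ctx.add_node("config push at 14:01", "evidence")
ctx.link("errors start at 14:02", "supports", "bad config push")
ctx.link("config push at 14:01", "supports", "bad config push")
ctx.promote(long_term)
print(long_term)
```

The unsupported "cache eviction" hypothesis and the raw evidence nodes stay on the whiteboard and are discarded with it, so they never reach long-term memory.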

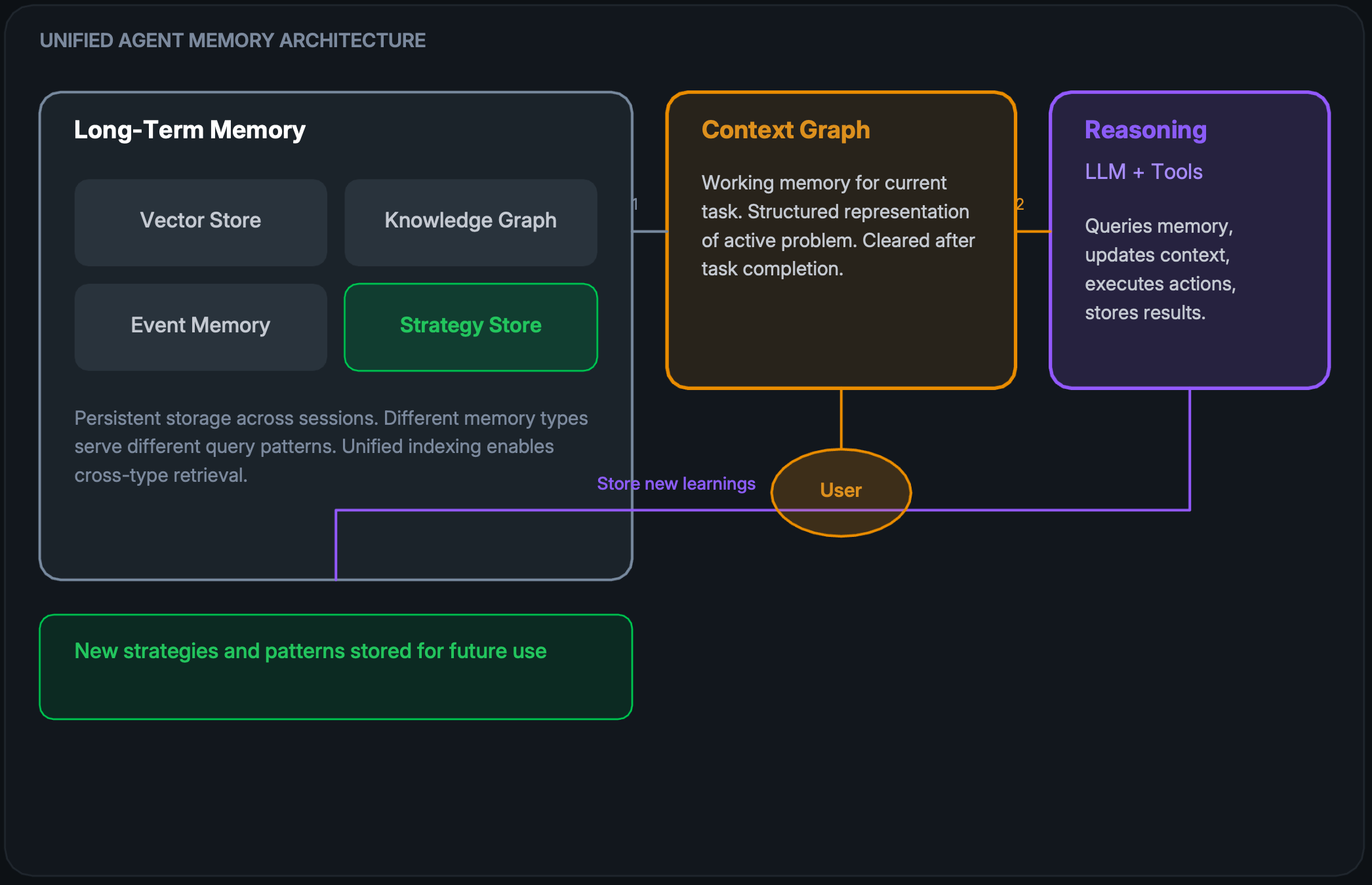

The Future: Unified Memory Architecture

The next generation of agents separates three distinct components (long-term memory, a context graph, and a reasoning engine), each with a specific role in the reasoning process.

This separation supports a closed loop: the reasoning engine can query long-term memory for relevant context, build a problem-specific context graph, execute solutions, and store successful patterns back into long-term memory.

Each component has a distinct responsibility. Long-term memory persists knowledge. The context graph maintains working state. The reasoning engine orchestrates the process and learns from outcomes.

The result is a system that improves over time—not through retraining, but through accumulated experience stored in a structured, queryable format.

Conclusion: From Information to Experience

The evolution of agent memory reflects a deeper shift in how we think about artificial intelligence. Early systems focused on storing information. The next generation focuses on storing experience.

Once agents start remembering how problems were solved, not just the data involved, they stop behaving like assistants and start behaving like systems that actually learn from doing the work.

— The fundamental shift in agent capability

The Evolution at a Glance

This progression matters because memory architecture determines the capability ceiling of any agent system. Sophisticated reasoning cannot compensate for an inability to learn from past interactions or maintain coherent context over time.

The systems being built today—with unified memory architectures that separate long-term storage from working context—represent a meaningful step toward agents that genuinely improve through use.

At BuildWright, we're applying these principles to transform how engineering teams investigate and resolve issues—building systems that learn from every ticket resolved.

Originally published on LinkedIn
